The cancellation events of the last decade followed a predictable script: A celebrity would commit a moral or ethical lapse, screenshots would surface, and the public would demand swift professional exile. Accountability, however messy, was the driving force.

In 2026, the engine of cancellation is no longer accountability; it is plausibility.

With generative AI now capable of creating hyper-realistic video and audio deepfakes that are hard to tell apart from genuine footage, the foundation of every celebrity’s public image, the verifiable truth, has dissolved. The biggest threat to a career is no longer what you did, but what AI can convincingly make the world believe you did.

We explore this terrifying new reality, why crisis management firms are panicking, and the two major deepfake attacks that have already permanently altered the landscape of celebrity defense.

The Shift: From Evidence to Denial

The standard playbook for a celebrity crisis is now obsolete.

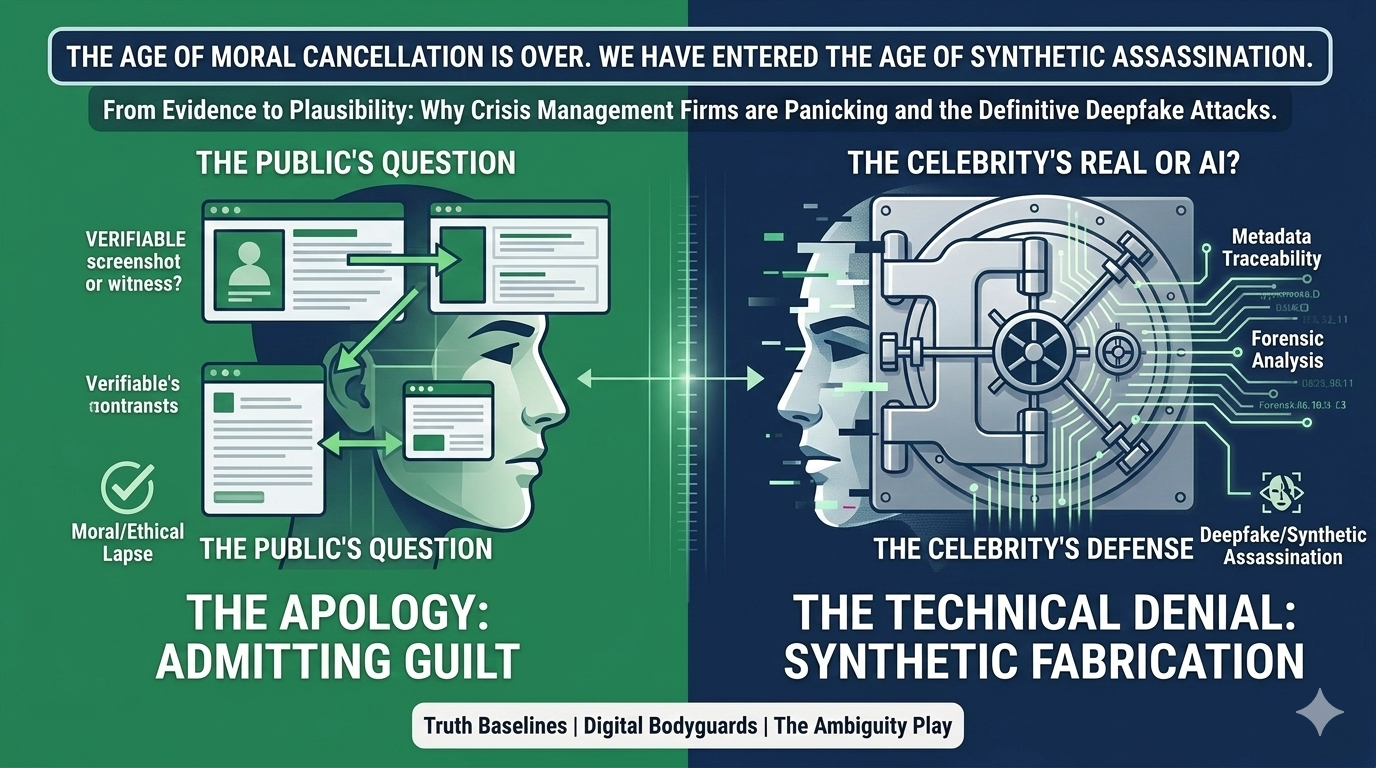

| Crisis Era | The Core Threat | The Public’s Question | The Celebrity’s Defense |
| --- | --- | --- | --- |
| Pre-2025 (Moral Crisis) | Verifiable screenshots or witnesses. | “Did they actually say/do that?” | The Apology: Admitting guilt and promising to do better. |
| 2026+ (Deepfake Crisis) | Undeniable, hyper-realistic video/audio. | “Is this video actually them, or AI?” | The Technical Denial: Claiming the content is a Synthetic Fabrication. |

The problem: Once a deepfake is viral, the damage is irreversible. The simple act of having to ask “Is it real?” means the celebrity has lost control of their narrative forever. Even with an eventual technical vindication, the public memory retains the scandalous image.

The Two Deepfake Attacks That Defined the Shift

Two recent, high-profile deepfake events have fundamentally changed how celebrity crisis management operates:

1. The Financial Scam (The SRK Case Study)

In a large-scale incident, a highly detailed deepfake video featuring a major global star (similar to the recent Shah Rukh Khan cases) began circulating, urging fans to invest immediately in a nonexistent crypto scheme.

- The Attack: The video was visually perfect, matching the star’s voice, cadence, and typical presentation style. It was a direct, criminal attack on the star’s credibility and the fan base’s financial trust.

- The Damage: Though the star’s team issued immediate denials, thousands of fans lost money within hours. The star’s face is now permanently associated in the public sphere with a major financial scam, damaging their brand’s value in endorsements forever.

- The New Defense: The defense was not based on “I would never say that,” but on AI watermarking and metadata traceability. This is a purely technical fight, far beyond the public’s understanding.
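To make "metadata traceability" a little more concrete, here is a minimal sketch of one way a team could mark its official clips so that anything else can be flagged as unverified. It is an illustration only, not the method used in any real case: the key, the function names `sign_clip` and `is_official`, and the sample bytes are all hypothetical, and a production system would more likely rely on public-key signatures or an industry provenance standard than on a shared secret.

```python
import hashlib
import hmac

# Hypothetical secret held by the star's team (assumption for this sketch).
# A real system would more likely use public-key signatures so that anyone
# can verify a clip without access to a secret.
SIGNING_KEY = b"example-key-not-a-real-secret"


def sign_clip(clip_bytes: bytes, key: bytes = SIGNING_KEY) -> str:
    """Return a hex tag that binds the key to the exact bytes of a clip."""
    return hmac.new(key, clip_bytes, hashlib.sha256).hexdigest()


def is_official(clip_bytes: bytes, tag: str, key: bytes = SIGNING_KEY) -> bool:
    """True only if the tag was produced for these exact bytes."""
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(sign_clip(clip_bytes, key), tag)


official = b"official announcement video bytes"
tag = sign_clip(official)

print(is_official(official, tag))                 # True
print(is_official(b"deepfake video bytes", tag))  # False
```

Note the limit of this approach: a failed check shows only that a clip was not published by the team. It does not prove the clip is synthetic, which is part of why the fight is so hard to explain to the public.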

2. The Private Scandal (The Voice Clone)

A high-profile actor was temporarily ‘cancelled’ after a 15-second audio clip went viral, allegedly catching him making extremely offensive and career-ending remarks on a private call.

- The Attack: The audio contained specific, verifiable background noises and verbal tics, making it feel hyper-authentic. It generated an immediate, universal boycott.

- The Defense Fight: The actor’s legal team did not debate the content of the remarks; they had to hire forensic AI audio analysts to prove the existence of voice cloning artifacts. It took 72 hours to get a conclusive report, but by then, the actor had already lost a major streaming deal.

- The Lesson: The new timeline for crisis management is effectively zero hours. If you cannot debunk a deepfake within the first hour of virality, the career damage is done.

The Terrifying Future of Celebrity Defense

The solution is not better apologies; it’s better technology and more secretive preparation.

- “Truth Baselines”: Crisis teams are now creating “truth baselines”—private, timestamped video and audio recordings of their clients saying benign phrases and reading neutral scripts. These baselines contain the biometric markers (voice prints, facial micro-movements) necessary to train AI detectors to spot future fakes.

- Digital Bodyguards: Specialized AI tools now monitor the web for high-risk generative content featuring their client’s likeness. These systems initiate automated takedown requests based on biometric violations, not just copyright.

- The Ambiguity Play: The most cynical strategy is to foster an image that is already so ambiguous and ironic that any scandalous claim—real or fake—is simply seen as “part of the brand.” When everything is spectacle, nothing is scandalous.
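The "truth baseline" idea can be sketched in a few lines. The example below assumes that some upstream model (not shown, and not specified in this article) has already turned each recording into a numeric voice print; the function names, the sample vectors, and the 0.8 threshold are all hypothetical. It logs a timestamped fingerprint of a baseline recording, then compares a suspect clip's voice print against the baseline using cosine similarity, a simplified stand-in for a trained detector.

```python
import hashlib
import math
from datetime import datetime, timezone


def baseline_entry(recording: bytes, label: str) -> dict:
    """Log a SHA-256 fingerprint and UTC timestamp for one baseline recording."""
    return {
        "label": label,
        "sha256": hashlib.sha256(recording).hexdigest(),
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def matches_baseline(baseline_print: list[float], suspect_print: list[float],
                     threshold: float = 0.8) -> bool:
    """True if the suspect voice print is close to the baseline (threshold is an assumption)."""
    return cosine_similarity(baseline_print, suspect_print) >= threshold


# Hypothetical voice prints; real ones would come from a speaker-embedding model.
baseline_print = [0.9, 0.1, 0.4]
similar_print = [0.88, 0.12, 0.41]
different_print = [0.1, 0.9, -0.3]

entry = baseline_entry(b"client reading a neutral script", "baseline-001")
print(entry["label"], entry["sha256"][:12])

print(matches_baseline(baseline_print, similar_print))    # True
print(matches_baseline(baseline_print, different_print))  # False
```

A caveat on the design: a high-quality voice clone may also score close to the baseline, so a similarity check like this one can only flag obvious mismatches. The timestamped hash is the more durable part, since it lets a team show that a given reference recording existed, unaltered, before any scandal broke.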

The age of moral cancellation is over. We have entered the age of Synthetic Assassination. The only people truly safe are those who can prove, with technical certainty, that the evidence of their downfall is a lie.